How To Spot A Garbage Poll In Five Easy Steps

Spoiler Alert: Most of them are junk.

How'd you all like those polls? Nice, huh? But how can you tell if the data is good or garbage? Let's explain it listicle style in Five Easy Steps.

Step 1: If It Seems Too Good to Be True, It Probably Is

This one probably seems obvious. If you stumble across a poll on FiveThirtyEight, Real Clear Politics, or Twitter that seems like an outlier, take it with a major grain of salt. If, for example, someone tries to tell you that Biden is leading in Indiana, or Trump is up in Oregon, they're probably full of shit.
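If you want to make the "outlier" smell test a little less vibes-based, here is a minimal sketch in Python. It assumes you have collected the margins from other recent polls of the same race yourself; the function name `is_outlier`, the two-standard-deviation threshold, and every number below are made up for illustration and do not come from any real poll or aggregator.

```python
from statistics import mean, stdev

def is_outlier(new_margin, other_margins, threshold=2.0):
    """Flag a poll whose margin sits far from the other recent polls.

    Margins are in points (candidate A minus candidate B).
    Needs at least two other polls to compute a spread.
    """
    avg = mean(other_margins)
    spread = stdev(other_margins)
    return abs(new_margin - avg) > threshold * spread

# Hypothetical margins from five other polls of the same state.
other_margins = [-10, -12, -9, -11, -13]

suspicious = is_outlier(2, other_margins)    # a poll showing A up by 2
ordinary = is_outlier(-12, other_margins)    # a poll showing A down by 12
print(suspicious, ordinary)
```

A flagged poll isn't automatically wrong, it just means you should go look at who ran it and how before you believe it.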

Step 2: Internals Are Usually Junk

Any campaign that can afford it will commission a poll to know where the candidate stands. Often campaigns choose to keep this information private and just use it to adjust their strategy. They'll usually hire a pollster affiliated with their party to run the survey, and only release the result if it's good news for the candidate. In a competitive race, the candidate has a strong incentive to make those "internal polls" look as good as possible. They're hardly going to release a result that shows them dead in the water — they'd never raise another dollar.

Not all internal polls are crap. But most have a strong lean toward the candidate who paid the pollster, which is why poll aggregators, like RCP, will label the polls with an R or D to indicate who paid the bill. The only internal pollster with a good track record is Public Policy Polling, aka PPP. The rest of them are probably bullshit.

Step 3: If a Poll Hides Its Methodology, Give It a Red Card

For casual poll readers, this may not make sense, so I'll show it visually. Say you're on FiveThirtyEight and you click on a poll at random. If that poll takes you to an article summarizing and jumping to conclusions based on the poll, but doesn't show you all the numbers, be cautious. Take for example this Hill/HarrisX poll done last month. There are no crosstabs available, just a link to an article whose underlying data is published nowhere else but The Hill, which just gave itself a clean bill of health after spending a year laundering John Solomon's anti-Biden conspiracies.

They've included this not very helpful table, which you can't download and which comes with no methodology. All you get are the topline results from one question, in a clunky chart. No one is paying money to conduct a one-question survey.

Notice that you can't see the whole chart at once? That makes it harder for people who might want to pick it apart by noting some odd findings. Do they really expect that Biden will win men, that the candidates are tied among women, and that Trump will win independents by eight points? Come on.

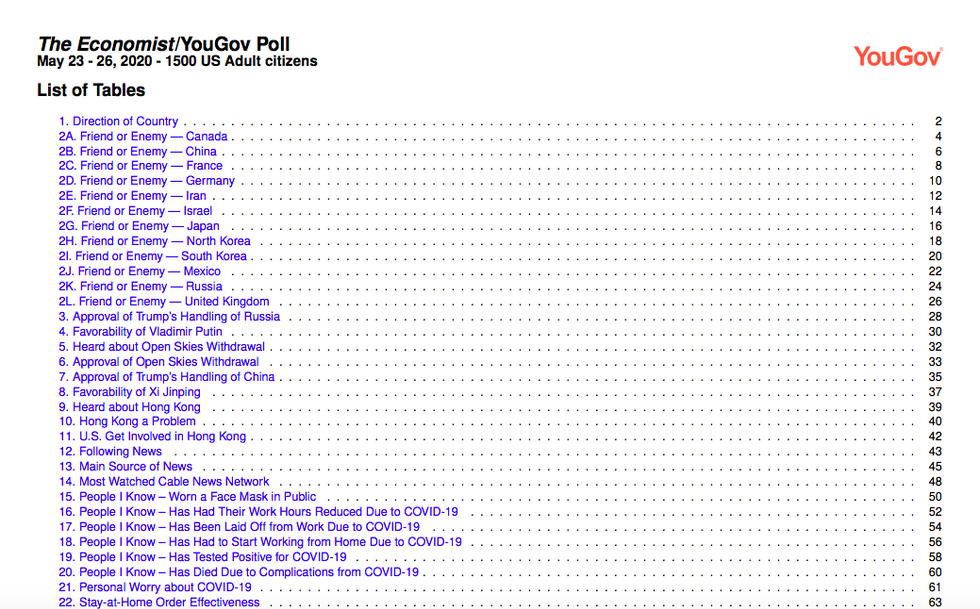

YouGov, show them how it's done! Here's the first of 321 pages of data from a recent poll. There are 121 questions, with detailed results for every one.

Which is why YouGov, though not perfect, gets its polls cited regularly, whereas Hill/HarrisX never does.

Step 4: Who is Getting Polled?

When pollsters conduct surveys, they often survey three different groups at a time: adults generally (A), registered voters (RV), and likely voters (LV). Sometimes the divergence between the three groups can give you information about which candidate is doing well at motivating turnout. But the "best" data comes from polls of likely voters only.

Step 5: No 100 Percent Landline or Cellphone, and No Financial Incentive

Finding people to take surveys is hard. Finding the right people to take surveys is harder.

In a perfect world, half of a survey's respondents would be reached by cellphone and half by landline. The sample would reflect the demographics of the state's voters and include about a thousand people. But we don't live in a perfect world, so pollsters often need to weight results to match the demographics of a state. In the real world, this usually means giving extra weight to responses from people making under $50,000. This is fine and generally increases the accuracy of the poll.
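For the curious, here's roughly what weighting looks like, as a minimal Python sketch. It assumes the simplest possible case: one demographic split (income) and a known share of each group among the state's voters. Real pollsters weight on many variables at once and their exact methods vary, so treat this as an illustration only. The function name `poststratify` and all of the numbers are invented for the example.

```python
def poststratify(sample_counts, population_shares):
    """Return one weight per group so the weighted sample matches the population."""
    total = sum(sample_counts.values())
    return {
        group: population_shares[group] / (count / total)
        for group, count in sample_counts.items()
    }

# Hypothetical poll: too few respondents making under $50,000.
sample_counts = {"under_50k": 300, "50k_plus": 700}
population_shares = {"under_50k": 0.45, "50k_plus": 0.55}
# Hypothetical share of each group backing Candidate A.
support = {"under_50k": 0.60, "50k_plus": 0.40}

weights = poststratify(sample_counts, population_shares)

total = sum(sample_counts.values())
raw = sum(sample_counts[g] * support[g] for g in sample_counts) / total

weighted_total = sum(sample_counts[g] * weights[g] for g in sample_counts)
weighted = sum(
    sample_counts[g] * weights[g] * support[g] for g in sample_counts
) / weighted_total

print(f"raw: {raw:.2f}, weighted: {weighted:.2f}")
```

In this made-up example the under-$50,000 group gets counted more heavily because it was underrepresented in the sample, and Candidate A's topline moves accordingly.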

But if you see a poll that is all landline or all cellphone, it's wrong. Usually all-landline polls oversample older voters, whereas all-cellphone polls oversample younger voters.

Finally, if a pollster cannot get enough results, sometimes they will call a third-party company and run the poll with that company's software. For example, Emerson Polling partnered with NexstarDigital, a company which "delivers powerful advertising and content monetization solutions to media brands and advertisers across U.S. national and local markets." If a company has partnered with a polling firm, disregard the results.

In the end, you kind of have to trust your gut. Voters don't typically swing drastically from election to election, and if a poll seems nuts, it probably is. Check out 538's pollster rankings for a cheat sheet. It can't tell you if a given poll is right, but it does show which pollsters have a decent track record.

[ FiveThirtyEight ]

